READ MORE: What is Synthetic Media: The Ultimate Guide (Mark van Rijmenam)

From virtual celebrities and MetaHumans to deepfakes and voice clones, novel forms of “synthetic” media blur the distinction between physical and digital environments and will radically accelerate the process of content creation and delivery. With them come important privacy questions and ethical dilemmas.

An article by future tech strategist and entrepreneur Mark van Rijmenam runs through where synthetic media is today, where it might be going, and what pitfalls we should be aware of.

“We are entering a new age where more people will be exposed to synthetic media,” he says. “It’s a mass social experiment, and we have no idea what the consequences of this medium might be. If we cannot predict or study its impact accurately, there is little hope of protecting ourselves against its dangers.”

But what is synthetic media? The definition given by van Rijmenam is “virtual media produced with the help of artificial intelligence (AI). It is characterized by a high degree of realism and immersiveness.” He continues, “Furthermore, synthetic media tends to be indistinguishable from other real-world media, making it very difficult for the user to tell apart from its artificial nature. It is possible to generate faces and places that don’t exist and even create a digital voice avatar that mimics human speech.”

Examples that exist right now include virtual celebrities like Lil Miquela, an online persona who does not exist in the real world, but has become one of the world’s most popular influencers on Instagram, with three million followers.

“She (it?) and other virtual influencers are becoming increasingly popular and will continue to do so,” says van Rijmenam.

The ability for anyone to create their own avatar to populate the metaverse is being driven by Epic Games, which has a program called MetaHuman that does just that.

“MetaHuman Creator enables you to create fully rigged photorealistic digital humans in minutes, in real-time, for use in video games, virtual and augmented reality content, architectural visualizations, and more.”

Van Rijmenam himself says he is now “transitioning” to a MetaHuman character, a digital twin of himself. That’s an interesting choice of word given today’s controversies surrounding gender identity.

On the audio side, artificial voice technology, such as text-to-speech and voice cloning, has become very popular. Companies here include Respeecher, Voiseed and Resemble.ai, which allows you to clone your voice to create digital avatars and use them in movies. As described by van Rijmenam, Voiseed makes audio content more human with a voice interface that communicates in authentic, natural language using emotion and intellect.

Synthetic media tools allow for creating complex data visualizations, or even videos, using only a spreadsheet. Analysts and researchers often use these to present findings to a broader audience. Art directors also use them to mock up ideas before they bring them to life in development.

Synthetic music has the potential to generate sounds that are indistinguishable from a human-produced track.

“AI generates ever-shifting soundscapes for relaxation and focus, powers recommendation systems in streaming services, facilitates audio mixing and mastering, and creates rights-free music.”

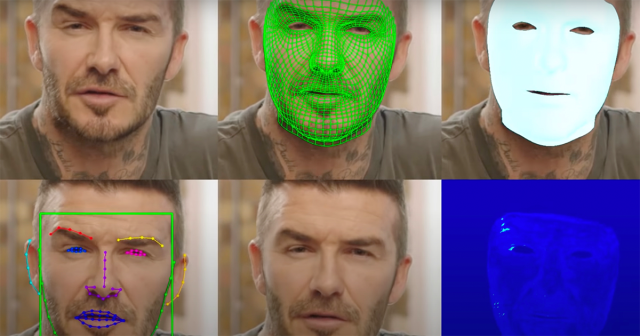

You can find AI-generated and copyright-free music using platforms like Icons8 and Evoke Music. Synthetic images are already being used for creating NFT art and generating realistic stock photos, while synthetic videos combine the worlds of photography and videography. Synthetic videos have taken on many forms, but one of the most popular types is deepfakes. These are face swaps, where one person’s face replaces another’s, or face reenactment in which the source actor controls the face of the target actor.

GANs

This is all made possible by advances in neural networks, in particular Generative Adversarial Networks (GANs). A GAN trains two networks against each other: a generator that produces candidate images and a discriminator that tries to tell them apart from real ones. Because the generator keeps improving until its outputs look natural, GANs enable synthetic media that is difficult to distinguish from real media, particularly in computer vision and image processing applications.

“At the same time, advances in machine learning and deep learning have made it possible to train computer vision algorithms on large datasets of images,” van Rijmenam says. “As a result, today’s neural networks can see things in photos that humans can’t even detect with our own eyes.”

What is Synthetic Media Good For?

As with most AI technology, there are pluses and minuses. The sheer volume of content required to build the worlds of the metaverse goes beyond what traditional computer-assisted graphics production can supply, whereas AI-driven media can be created rapidly. One only has to look at the impact of the fledgling text-to-picture AI DALL-E 2 to realize the benefits this approach is going to have in kickstarting artistic creation in the 3D internet.

Such technology is only going to advance. Text-to-picture is evolving into text-to-video and will eventually lead to the creation of full-scale feature films. There have been several experiments in creating long-form AI-composed content, but what distinguishes the next generation of synthetic media is that it will be indistinguishable from the real thing.

“It can create an illusion of authenticity,” says van Rijmenam. “This media type allows companies to connect with their audiences without paying actors or hiring professional photographers or videographers.”

If certain jobs in media production are going to be automated out of existence, a new type of job will emerge to take their place.

“The primary focus is interacting with AI to help it become more intelligent and capable,” says the futurist. “The skill of those who work with AI is important. If employees do not stay updated on technological advancements and improve their knowledge, they could be forced out of their jobs — no matter how hard they try to avoid automation.”

Deepfakes and The Law

As AI becomes ever more sophisticated, so do the ethical challenges it raises. The biggest concern is ensuring that algorithms do not engage in abusive or unethical practices towards humans, and vice versa. Text and video can be generated to spread misleading or false information (fake news).

“When it comes to deepfake issues, journalism cannot escape the fact that its old forms of reporting are under pressure due to the rise of digital information,” van Rijmenam says. “Therefore, we need media literacy and verification to report on these deepfake videos and regarding worldwide disinformation or propaganda.”

Personal rights and intellectual property laws will also be challenged. The legality of AI-generated counterfeit content is often unclear, making it difficult to know where your rights lie.

“Copyright law protects original intellectual property from copying,” van Rijmenam explains. “However, in an era of exponential growth, it will soon be unable to distinguish between ‘real’ and ‘fake’ text.”

Who should own the rights to a synthetic movie where all the actors are created digitally? The studio, or the creators of the algorithm that generated the characters? These questions are still to be explored and framed in law.

“Synthetic media will need to be regulated by law and policy, so we’ll need new rules to determine ownership and licensing,” he says.