TL;DR

- In 2024, deployment of content credentials will begin in earnest, spurred by new AI regulations in the EU and the United States.

- For media companies, content credentials are a way to build trust at a time when rampant disinformation makes it easy for people to cry “fake” about anything they disagree with.

- The BBC and other big media organizations are making a push to use a content credentials system to allow Internet users to check the validity and provenance of images and videos.

READ MORE: Content Credentials Will Fight Deepfakes in the 2024 Elections (IEEE Spectrum)

In 2024, major national elections in some of the world’s biggest democracies, including India, the US and the UK, could be shaped by the alarmingly real threat of online disinformation.

To counter the threat of deepfake content, media companies are making moves to embed news-related video and still images with tags that display their provenance.

Digital provenance, also referred to as data integrity or data dignity, has long been proposed as the most effective means of combatting AI-manipulated disinformation published online.

Now, major news organizations, tech companies and social media networks appear on track to take concrete steps that would give audiences transparency about the video and stills they are viewing.

“Having your content be a beacon shining through the murk is really important,” Laura Ellis, head of technology forecasting at the BBC, told IEEE Spectrum, which has a thorough report on the latest developments.

The BBC is a member of the Coalition for Content Provenance and Authenticity (C2PA), an organization developing technical methods to document the origin and history of digital media files, both real and fake.

The C2PA group, which brings together the Adobe-led Content Authenticity Initiative and a media provenance effort called Project Origin, released its initial standards for attaching cryptographically secure metadata to image and video files in 2021.

Since releasing the standards, the group has been further developing the open-source specifications and implementing them with leading media companies. The Canadian Broadcasting Corp. (CBC) and The New York Times are also C2PA members. Andrew Jenks, director of media provenance projects at Microsoft, is the C2PA chair.

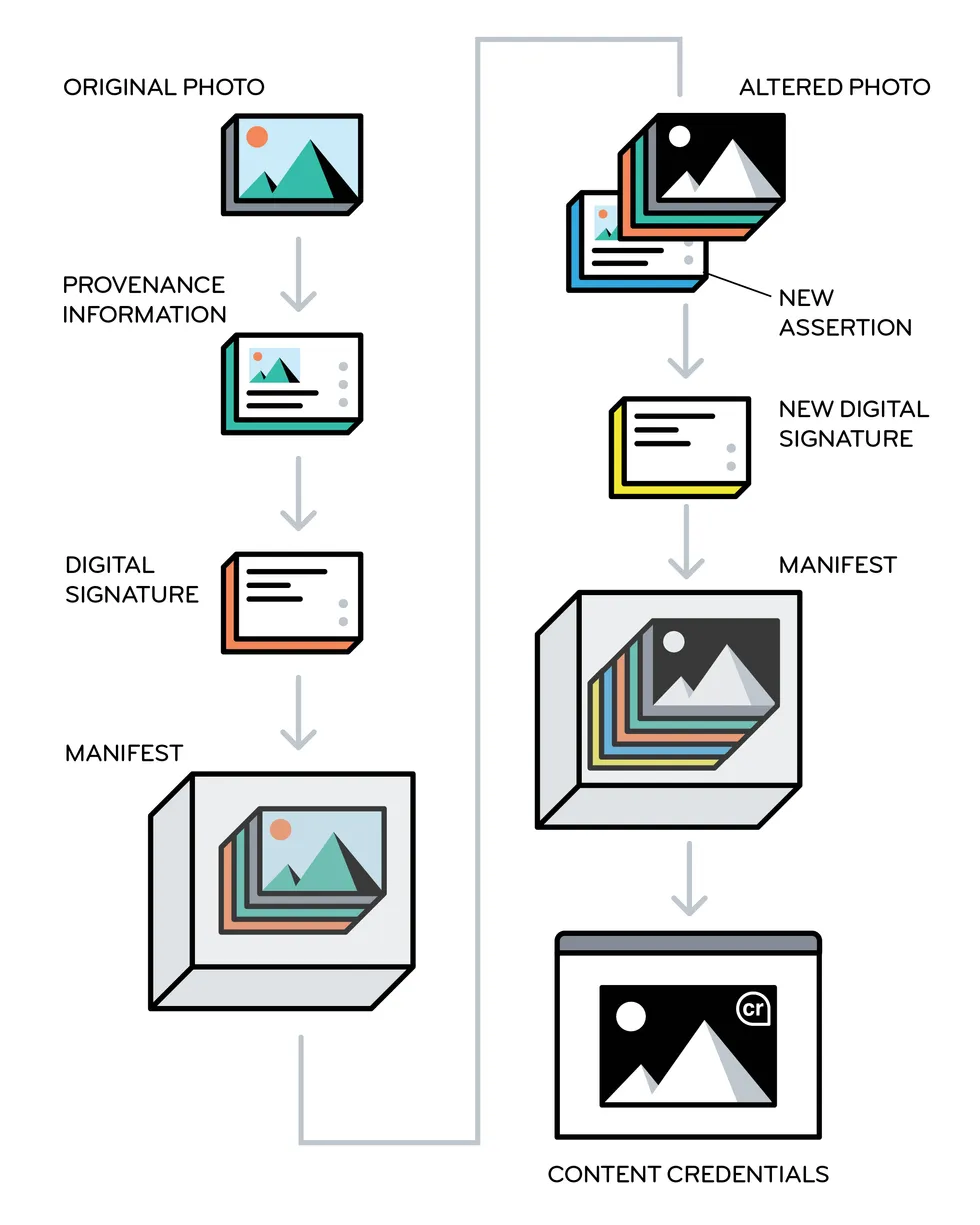

As IEEE Spectrum details, images that have been authenticated by the C2PA system can include a small “cr” icon in the corner. Users can click on it to see whatever information is available for that image: when and how it was created, who first published it, what tools were used to alter it, how it was altered, and so on. However, viewers will see that information only if they’re using a social media platform or application that can read and display content-credential data.

READ MORE: Introducing Official Content Credentials Icon (C2PA)

The same system can be used by AI companies that make image- and video-generating tools; in that case, the synthetic media that’s been created would be labeled as such.

Adobe, for example, generates the relevant metadata for every image that’s created with its image-generating tool Firefly. Microsoft does the same with its Bing Image Creator.

While only a few companies are integrating content credentials so far, regulations are currently being crafted that will encourage the practice. The European Union’s AI Act, now being finalized, requires that synthetic content be labeled. And the Biden administration recently issued an executive order on AI that requires the Commerce Department to develop guidelines for both content authentication and labeling of synthetic content.

READ MORE: What You Need to Know About Biden’s Sweeping AI Order (IEEE Spectrum)

Bruce MacCormack, chair of Project Origin and a member of the C2PA steering committee, told IEEE Spectrum that the big AI companies started down the path toward content credentials in mid-2023, when they signed voluntary commitments with the White House that included a pledge to watermark synthetic content. “They all agreed to do something,” he notes. “They didn’t agree to do the same thing. The executive order is the driving function to force everybody into the same space.”

Later this year, the CBC aims to debut a content-credentialing system that will be visible to any external viewer using a type of software that recognizes the metadata.

“It’s secure information that can grow through the content life cycle of a still image,” says Tessa Sproule, the CBC’s director of metadata and information systems. “You stamp it at the input, and then as we manipulate the image through cropping in Photoshop, that information is also tracked.”
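The “stamp it at the input, then track each edit” idea can be illustrated with a minimal Python sketch. This is not the C2PA format or any CBC implementation: real content credentials use certificate-based digital signatures, whereas this sketch substitutes an HMAC with a shared demo key so it runs on the standard library alone. The names `SIGNING_KEY`, `add_entry` and `verify` are hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical demo key. Real content credentials rely on certificate-based
# digital signatures, not a shared HMAC secret.
SIGNING_KEY = b"demo-key-not-for-production"


def _sign(payload: dict) -> str:
    """Return an HMAC-SHA256 tag over a canonical JSON encoding of payload."""
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hmac.new(SIGNING_KEY, encoded, hashlib.sha256).hexdigest()


def add_entry(history: list, action: str, tool: str, image_bytes: bytes) -> list:
    """Return a new history with one more entry, bound to the image bytes
    and to the tag of the previous entry."""
    payload = {
        "action": action,
        "tool": tool,
        "image_sha256": hashlib.sha256(image_bytes).hexdigest(),
        "previous_tag": history[-1]["tag"] if history else None,
    }
    return history + [{**payload, "tag": _sign(payload)}]


def verify(history: list, image_bytes: bytes) -> bool:
    """Check every tag, every link to the previous entry, and that the
    last entry describes the image being inspected."""
    if not history:
        return False
    previous_tag = None
    for entry in history:
        payload = {k: v for k, v in entry.items() if k != "tag"}
        if payload.get("previous_tag") != previous_tag:
            return False  # chain is broken or an entry was removed
        if not hmac.compare_digest(entry.get("tag", ""), _sign(payload)):
            return False  # entry was altered after it was stamped
        previous_tag = entry["tag"]
    return history[-1]["image_sha256"] == hashlib.sha256(image_bytes).hexdigest()


if __name__ == "__main__":
    original = b"raw image bytes"   # stand-in for the captured file
    cropped = b"raw image"          # stand-in for the edited file
    history = add_entry([], "captured", "camera", original)
    history = add_entry(history, "cropped", "Photoshop", cropped)
    print(verify(history, cropped))   # True
    print(verify(history, original))  # False: last entry describes the crop
```

Because each entry includes the previous entry's tag, changing or dropping an earlier step invalidates the chain, which is the property that lets the record grow with the image without losing its history.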

The BBC has also been running workshops with media organizations to talk about integrating content-credentialing systems. Recognizing that it may be hard for small publishers to adapt their workflows, Ellis’s group is also exploring the idea of “service centers” to which publishers could send their images for validation and certification; the images would be returned with cryptographically hashed metadata attesting to their authenticity.

But none of these efforts will have much impact if social media platforms don’t enact something similar. After all, as IEEE Spectrum notes, viewers are more likely to trust an image, validated or not, on the BBC than they are on Facebook.

Meta is reportedly very engaged on this issue, down to the practicalities of the additional compute that content watermarking would require.

“If you add a watermark to every piece of content on Facebook, will that make it have a lag that makes users sign off?” says Claire Leibowicz, head of AI and media integrity for the Partnership on AI.

However, she expects regulations to be the biggest catalyst for content-credential adoption.

If social media platforms are the end of the image-distribution pipeline, the cameras that record images and videos are the beginning. In October, Leica unveiled the first camera with built-in content credentials; C2PA member companies Nikon and Canon have also made prototype cameras that incorporate credentialing.

READ MORE: This Leica Camera Stops Deepfakes at the Shutter (IEEE Spectrum)

But hardware integration should be considered “a growth step,” says Microsoft’s Jenks. “In the best case, you start at the lens when you capture something, and you have this digital chain of trust that extends all the way to where something is consumed on a Web page,” he says. “But there’s still value in just doing that last mile.”

Synthetic images and videos are “going to have an impact” in the 2024 US presidential election, warned Jenks. “Our goal is to mitigate that impact as much as possible.”