TL;DR

- The degree to which businesses and workers learn to trust their AI “colleagues” could play an important role in their business success.

- Mistrust of AI can come from business leaders, front-line workers, and consumers. Regardless of its origin, it can dampen enterprises’ AI enthusiasm and, in turn, adoption.

- AI will progress to exhibiting empathic emotional intelligence and then to general-purpose AI, which stands to deliver versatile systems that can learn and imitate a collection of previously uniquely human traits.

READ MORE: Tech Trends 2023 Prologue – A brief history of the future (Deloitte)

We spent the last 10 years trying to get machines to understand us better. It looks like the next decade might be more about innovations that help us understand machines, Deloitte predicts in its end-of-year Tech Trends 2023 report.

Few business leaders doubt AI’s ability to contribute to the team, and Deloitte says there’s plenty of evidence that businesses using AI pervasively throughout their operations perform at a higher level than those that don’t. But there’s a trust issue when integrating AI into the workforce. Specifically, enterprises have a hard time trusting AI with mission-critical tasks.

In short, if humans don’t trust machines or don’t think they’re making the right call, the machines won’t be used.

“With AI tools increasingly standardized and commoditized, few businesses may realize true competitive gains from crafting a better algorithm,” the report states. “Instead, what will likely differentiate the truly AI-fueled enterprise from its competition will be how robustly it uses AI throughout its processes. The key element here, which has developed much slower than machine learning technology, is trust.”

Deloitte elaborates on the argument. Computers were once seen as more or less infallible machines that simply processed discrete inputs into discrete outputs, with calculations that were never wrong.

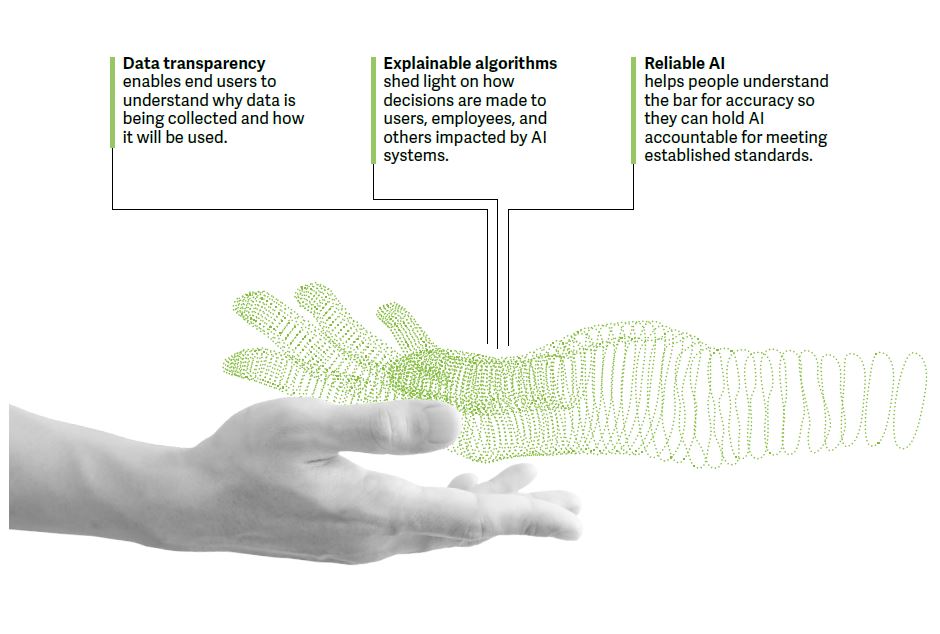

As algorithms increasingly shoulder probabilistic tasks such as object detection, speech recognition, and image and text generation, the real impact of AI applications may depend on how much their human colleagues understand and agree with what they’re doing.

“What may matter in the future is not who can craft the best algorithm, but rather who can use AI most effectively.”

In that case, developing processes that leverage AI in transparent and explainable ways will be key to spurring adoption.

One of the biggest clouds hanging over AI today is its black-box problem. Because of how certain algorithms train, it can be very difficult, if not impossible, to understand how they arrive at a recommendation.

“Asking workers to do something simply because the great and powerful algorithm behind the curtain says to is likely to lead to low levels of buy-in.”

How does this lack of trust manifest itself in the creative industries and their increasing use of generative AI tools like OpenAI’s DALL-E 2 image generator and GPT-3 text generator?

“In many cases, generative AI is proving itself in areas that were once thought to be automation-proof,” says Deloitte. “Even poets, painters, and priests are finding no job will be untouched by machines.”

That does not mean, however, that these jobs are going away, the report insists. “Even the most sophisticated AI applications today can’t match humans when it comes to purely creative tasks such as conceptualization, and we’re still a long way off from AI tools that can unseat humans in jobs in these areas.”

The prevailing approach to bringing in new AI tools is to position them as assistants, not competitors.

“Workers and companies that learn to team with AI and leverage the unique strengths of both AI and humans may find that we’re all better together,” says Deloitte. “Think about the creative, connective capabilities of the human mind combined with AI’s talent for production work. We’re seeing this approach come to life in the emerging role of the prompt engineer.”

As enterprises consider adopting these capabilities, they could benefit from thinking about how users will interact with them and how that will impact trust.

“Think of deploying AI like onboarding a new team member,” the consultancy advises. “We know generally what makes for effective teams: openness, rapport, the ability to have honest discussions, and a willingness to accept feedback to improve performance. Implementing AI with this framework in mind may help the team view AI as a trusted copilot critic.”

For some businesses, the functionality offered by emerging AI tools could be game-changing. But a lack of trust could ultimately derail these ambitions.

Deloitte also addresses the longer-term future of AI, which it characterizes as “exponential intelligence.”

“Affective AI — empathic emotional intelligence — will result in machines with personality and charm,” says Mike Bechtel, Deloitte’s chief futurist. “We’ll eventually be able to train mechanical minds with uniquely human data — the smile on a face, the twinkle in an eye, the pause in a voice — and teach them to discern and emulate human emotions. Or consider generative AI: creative intelligence that can write poetry, paint a picture, or score a soundtrack.”

After that, we may see the rise of general-purpose AI: intelligence that has evolved from simple math to polymath. Today’s AI is capable of single-tasking, good at playing chess or driving cars but unable to do both. General-purpose AI stands to deliver versatile systems that can learn and imitate a collection of previously uniquely human traits.
