READ MORE: The Future Today Institute’s 15th Anniversary Tech Trends Report (FTI)

Sensory clickbait, reporting from the metaverse, the rise of one-to-few networks, and the impact of natural language search are among the forces changing how we will gather, publish and consume content over the next decade.

As outlined by The Future Today Institute’s Tech Trends Report, these are well-researched ideas fleshed out with concrete examples. Anyone interested in the future of news should find food for thought in this edited version.

AI in the Newsroom

So-called computer-directed reporting applies natural language processing (NLP) algorithms and artificial intelligence to automate many common tasks like curating a homepage and writing basic news stories. As these tools mature, FTI predicts they’ll let newsrooms reassign staff toward creating journalism — or pursue more aggressive cost-cutting.

This is already happening. Sophi, created by Canadian news group The Globe and Mail, is apparently trusted to curate the website as well as (or better than) human editors. AP has automated the production of some corporate earnings stories for nearly a decade. In 2020, a UK team of five journalists at Reporters And Data And Robots produced more than 140,000 stories and 55 million words using AI.

As NLP algorithms grow more powerful, they’ll be useful for more editorial tasks, suggests FTI. Computer-directed reporting could let human journalists focus on higher-value reporting — or it could justify layoffs.

“News organizations face an ethical void when adapting journalism-producing AI systems,” the report warns. “How should editors detect bias in an algorithm? How should an error made by a natural language generator be corrected? Can AI really know what’s newsworthy? Should an AI system replace human judgment for information consumed by millions of people?”

Answering these questions is all the more urgent because tech companies, not journalists, drive much of the innovation in this space.

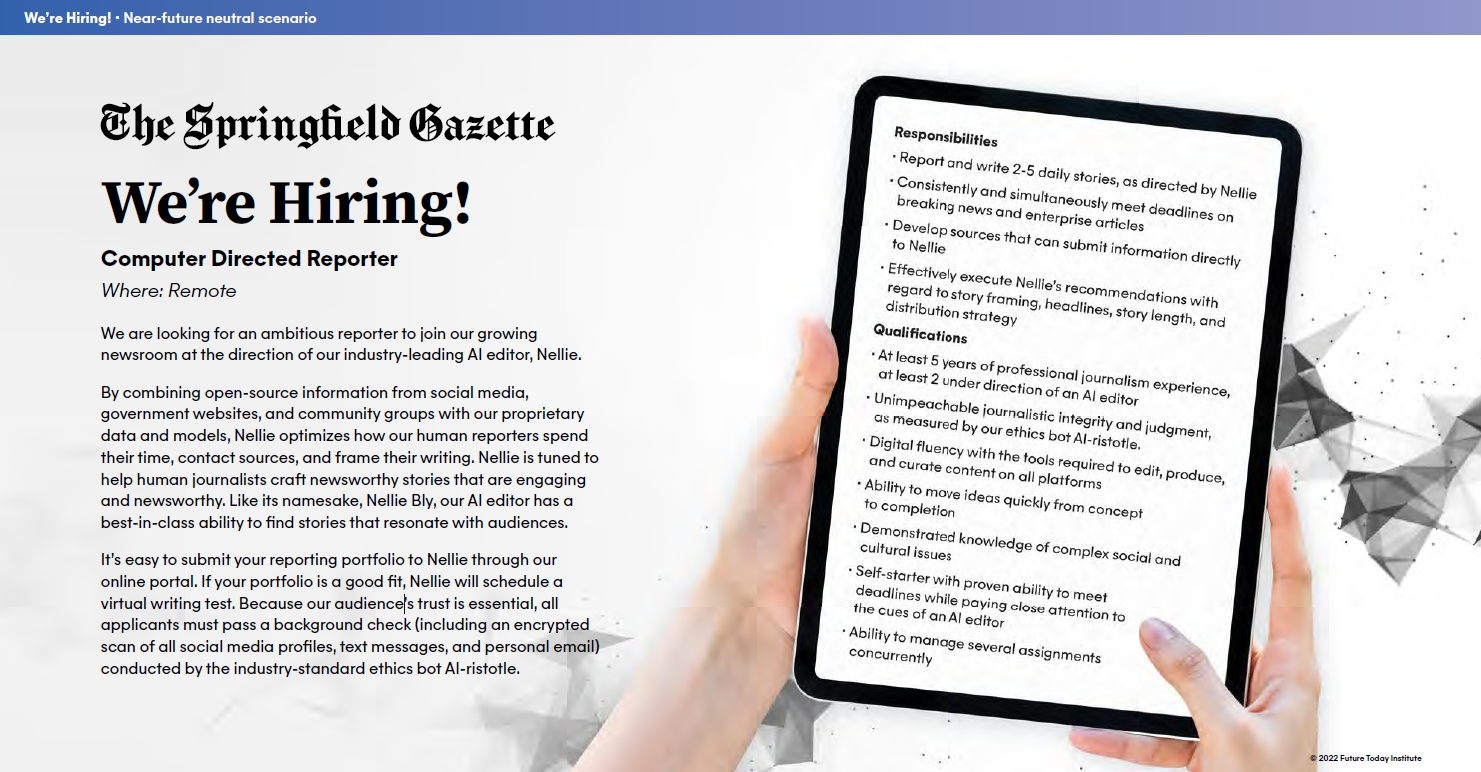

FTI even imagines a “near-future scenario” in which a news organization advertises “for an ambitious reporter to join our growing newsroom at the direction of our industry-leading AI editor, Nellie.”

“By combining open-source information from social media, government websites, and community groups with our proprietary data and models,” the mocked-up ad continues, “Nellie optimizes how our human reporters spend their time, contact sources, and frame their writing. Nellie is tuned to help human journalists craft newsworthy stories that are engaging and newsworthy.”

That should scare all of us.

News from the Metaverse

“While most news organizations today treat metaverse platforms as part of the gaming beat, we’re seeing signals that those spaces will be used to reach voters and organize political action. Journalists need to decide how they cover newsworthy events and avatars as people spend more time in virtual spaces,” the report says.

For example, during the New York City mayoral primary, an avatar of Andrew Yang made a campaign appearance in the Zepeto metaverse. The virtual event reached a small audience and was primarily covered on gaming websites, but raised important questions about how mainstream media would respond if Yang broke news on a virtual platform.

Other examples: The Norwegian government hosted a virtual Constitution Day parade in Minecraft in May 2020 that attracted 37,000 participants. Leading Norwegian newspaper Aftenposten wrote only a brief article about the event, using statistics published by the Constitution Day organizers (raising the question of whether the figures were verified by independent journalists). When US Representatives Alexandria Ocasio-Cortez and Ilhan Omar played Among Us live on Twitch, they reached more than five million people and generated headlines in mainstream media, but there was no sustained coverage of the political organizing and engagement happening in non-traditional spaces.

This sort of outreach from celebs, politicians, and corporations is only going to grow in the metaverse as they can “hyper-target” interest groups based on specific digital communities.

Journalists need to start thinking about how they will cover entities that pander at scale: How will they report on contradictory statements online and in real life? Which version of a statement is authentic — the one made by an avatar or the one made standing behind a physical podium?

“News organizations should also start thinking about their long-term value proposition: If more of our life happens on platforms with built-in archiving and playback capabilities, does a journalist’s role need to shift away from telling people what happened?”

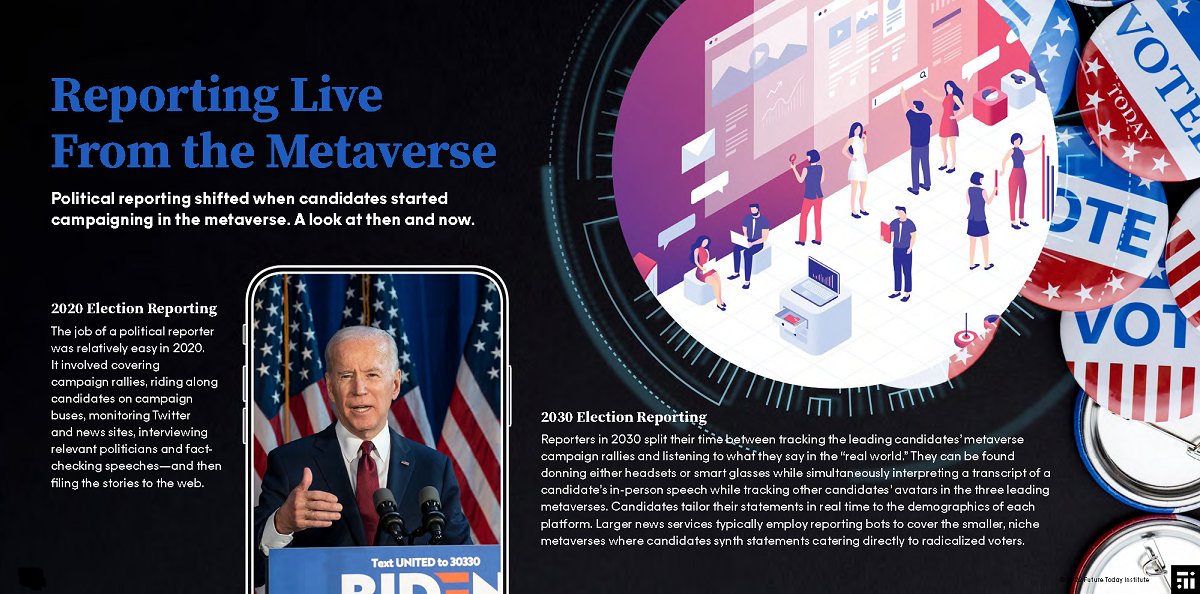

So imagine what the job of a political reporter might be a decade from now. At the time of the last US presidential election, the job was fraught enough with misinformation, but it generally involved covering campaign rallies, piggybacking on campaign buses, monitoring social and news sites, interviewing relevant politicians and fact-checking speeches, and then filing the stories to the web.

In 2030, political hacks might split their time between tracking the leading candidates’ metaverse campaign rallies and listening to what they say in the real world.

“They can be found donning either headsets or smart glasses while simultaneously interpreting a transcript of a candidate’s in-person speech while tracking other candidates’ avatars in the three leading metaverses. Candidates tailor their statements in real time to the demographics of each platform. Larger news services will typically employ reporting bots to cover the smaller, niche metaverses where candidates synthesize statements catering directly to radicalized voters.”

Missing Archive for the Record

FTI highlights the increasing risk of digital frailty: the collective vulnerability we face when we fail to consider the long-term consequences of losing digital archives to technical glitches, evolving file systems, or intentional design.

In just one example, Dallas lost more than 20 terabytes of police files during a server migration in March 2021 because the city had no formal system for managing data storage. The mistake — triggered by a single staffer in the IT department — jeopardized 17,494 family violence prosecutions, including 1,000 priority cases, according to the report.

“Digital frailty could deny future historians access to crucial primary sources about our time. While the Internet Archive and others try their best to create snapshots in time of the internet, those services struggle with dynamic sites that rely on JavaScript or those with highly personalized experiences like TikTok or Instagram.”

But creating a comprehensive, indelible record of all digital assets could be just as risky as archiving nothing.

“Young people create countless amounts of data daily, from posts shared on social networks to assignments posted to their school’s digital classroom. Do young people have the right to a blank slate when they reach adulthood, or should they be held accountable for every piece of data they create on the way to maturity?”

Come on “Feel” the News

News delivered through devices like smart glasses or headphones that directly integrate with a user’s senses is termed sensory journalism. This trend will enable new ways for journalism to resonate with readers, reckons FTI, but also raises the specter of “sensory clickbait” that is designed to manipulate a user’s emotions.

FTI cites a 2020 experiment in which environmental researchers found that showing people a VR scenario about climate change could make them feel more empathetic about the ocean’s future. Other research has shown that VR experiences generate longer-lasting emotional responses than text-based approaches to sharing perspectives.

The technology that will enable sensory journalism is still in its early days, but to FTI that means more time to consider the thorny questions this trend poses:

How will journalists ensure their work is accessible to all — including groups that tech companies frequently fail to prioritize (women, people with disabilities, and people of color)? What are the ethical guidelines for telling stories that integrate with a user’s senses?

“As the devices enabling sensory journalism become more immersive, journalists will need to examine how their work could manipulate people in unforeseen ways.”

Investigating the Algorithms

News organizations need specialized reporters with the technical skills to understand how technology operates in the world — and to explain it to a nontechnical audience.

Facebook whistleblower Frances Haugen’s leak of internal Facebook documents helped journalists at many news organizations better understand the social network’s algorithms. One story, for example, traced the weights that Facebook assigned different emoji reactions when deciding how to rank stories in users’ news feeds. That reporting helped bring specifics to the debate about how algorithmic distribution impacts society, but such investigations will only get harder as AI seeps into all aspects of social and corporate life.
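For readers who want a feel for what "weights assigned to different reactions" means in practice, here is a minimal sketch of weighted engagement ranking. It is not Facebook's system: the weight values, the `Post` class, and the `score` and `rank` functions are all invented for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical weights, invented for illustration only.
# They are NOT the values used by any real platform.
REACTION_WEIGHTS: Dict[str, float] = {
    "like": 1.0,
    "love": 2.0,
    "angry": 2.0,
    "comment": 3.0,
}


@dataclass
class Post:
    title: str
    # Maps a reaction type (e.g. "like") to how many times it occurred.
    reactions: Dict[str, int] = field(default_factory=dict)


def score(post: Post, weights: Dict[str, float] = REACTION_WEIGHTS) -> float:
    """Sum each reaction count multiplied by its weight (unknown types count as 0)."""
    return sum(weights.get(kind, 0.0) * count for kind, count in post.reactions.items())


def rank(posts: List[Post], weights: Dict[str, float] = REACTION_WEIGHTS) -> List[Post]:
    """Return posts ordered from highest to lowest weighted score."""
    return sorted(posts, key=lambda p: score(p, weights), reverse=True)


calm = Post("Council meeting recap", {"like": 100})
heated = Post("Outrage over parking fees", {"angry": 60})
ranked = rank([calm, heated])
```

Under these made-up weights, the post with 60 angry reactions outranks the one with 100 likes, which is the kind of effect a reporter would need to be able to spot and explain.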

“Investigative skills to unpack technology are essential in newsrooms, in order to serve the fundamental journalist mandate of holding the powerful accountable,” says FTI.

The report also finds that major newsrooms are increasingly building teams that fuse multiple disciplines to create impactful reporting. Examples include teams at The Washington Post and The New York Times using crowdsourced footage to recreate newsworthy moments from multiple angles; tech-focused publications like The Markup have launched tools that make it easy for laypeople to see how they are tracked and targeted online.

AI Requires New Search Model

Advances in voice interfaces allow consumers to speak their search while image recognition algorithms let users search with their camera. These non-text searches can deliver more relevant results than traditional text-based queries, finds the report. This will be tremendously disruptive to businesses that rely on search traffic.

“Natural language search interfaces — whether deployed in AI assistants or as a feature in browser-based search engines — threaten the audience strategy for all kinds of media organizations.”

To maintain existing traffic levels, the report advises publishers to consider how their structured data models will support audio and image search. Because natural language queries tend to yield only one result, the risks to media outlets or e-commerce sites that fail to optimize for search are profound — especially as consumers become more comfortable making purchase decisions through voice interfaces.
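The report does not name a specific format for structured data, so the following is only a sketch under one assumption: that a publisher describes its articles with the schema.org vocabulary in JSON-LD, including the `speakable` property that marks sections suited to text-to-speech. The function name `build_article_jsonld`, the sample headline, and the URLs are hypothetical.

```python
import json
from typing import List


def build_article_jsonld(
    headline: str,
    published: str,
    image_url: str,
    speakable_selectors: List[str],
) -> str:
    """Return a schema.org NewsArticle description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "NewsArticle",
        "headline": headline,
        "datePublished": published,  # ISO 8601 date string
        "image": [image_url],  # gives image-based search something to index
        # Marks which page sections are best suited to being read aloud.
        "speakable": {
            "@type": "SpeakableSpecification",
            "cssSelector": speakable_selectors,
        },
    }
    return json.dumps(data, indent=2)


# Hypothetical article values, for illustration only.
example = build_article_jsonld(
    headline="City council approves new transit plan",
    published="2022-03-01",
    image_url="https://example.com/images/transit.jpg",
    speakable_selectors=[".headline", ".summary"],
)
```

The resulting string would typically be embedded in the article page inside a `script` tag of type `application/ld+json`. Whether a given voice assistant or search engine actually uses it depends on that service.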

One-to-Few Networks

Low-cost tools to produce newsletters, podcasts, and niche experiences are enabling individuals to create personal media brands. These products can super-serve an audience, “but can also be a powerful vector for disinformation and misinformation,” because they don’t have the editorial safeguards that protect traditional media.

Leading examples include Substack, which committed $1 million to support independent creators wanting to launch local news newsletters in the US, and open-source alternative Ghost. Some one-to-few networks bring together multiple ways to connect their community, like Sidechannel, a Discord server for paid subscribers to several technology newsletters.

In lowering the barrier to entry for new competitors to legacy media, the technologies behind one-to-few networks are a double-edged sword. The same technology that empowers neighborhood-level “Buy Nothing” groups to cut down on waste and unnecessary consumption can allow networks of white supremacists to plan acts of domestic terror.

Free Speech Debate Moves Further Online

The First Amendment offers broad protections for what individuals and companies in the US can do, leaving plenty of room for debate about what they should do. That tension is opening up new paradigms for how content is regulated and moderated online.

Increased scrutiny has led mainstream platforms to take a more aggressive posture toward removing speech that violates their terms of service. Facebook removed more than 20 million posts containing COVID-19 misinformation from its app and Instagram between the start of the pandemic and June 2021. Even former President Trump’s Truth Social network sets limits on speech: Its terms of service give the site permission to remove posts that “disparage, tarnish, or otherwise harm, in our opinion, us and/or the site.”

In a Columbia Law Review article, lecturer Evelyn Douek argues that our approach to online speech has reached an inflection point: Instead of privileging the rights of individuals to post without restriction, “platforms are now firmly in the business of balancing societal interests and choosing between error costs on a systemic basis.”

Trust in all media has been falling globally since 2018, according to the Edelman Trust Barometer, which also found that the majority of people globally believe that journalists are purposefully trying to mislead them. People generally report higher levels of trust in the news they themselves consume than in the media overall, according to the 2021 Reuters Institute Digital News Report. But the same report finds that consumers across the world report lower trust in information they get from search and social media. That’s a big problem for news organizations, because that’s where a big portion of their users, and a disproportionate share of their new users, come from.

Learn to Scrutinize the Facts

“Thriving democracies need citizens who can evaluate and access reliable information,” Stanford University professor Sam Wineburg has said, yet emerging technologies threaten to further confuse young people.

“Thanks to the internet, more information may be available today than at any point in human history. But not all of it is created equally,” FTI warns. “Teaching kids how to identify reliable information will prepare them for the workplace — and avoid a future without shared facts.”

Finland, for example, has included media literacy in its national curriculum for nearly a decade. In the US, 14 states have passed legislation requiring students to learn the skills needed to critically analyze and interpret media messages. But 14 out of 50 is not a healthy ratio.

“We’ve already seen that disinformation and misinformation can have a profound impact on our society, whether through disrupting our elections or undermining trust in the medical establishment. If governments won’t step in to provide education, news organizations will need to fill the void. The inability for citizens to agree on shared facts undercuts their business model — and the basic fabric of our society.”